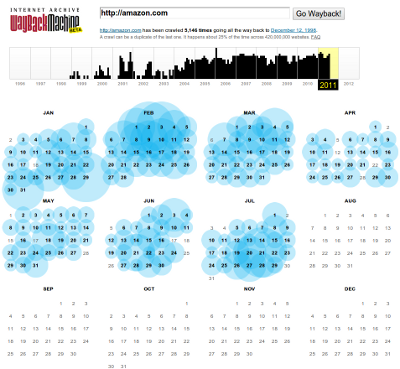

If a website is offline or limits how quickly it can be crawled, then downloading from someone else’s cache may be the only practical option. In previous posts I discussed using Google Translate and Google Cache to help crawl a website. Another useful source is the Wayback Machine at archive.org, which has been crawling and caching webpages since 1996.

Here is the list of downloads available for a single webpage, amazon.com:

Or to download the webpage at a certain date:

| Date | URL |
| --- | --- |
| 2011 | http://wayback.archive.org/web/2011/amazon.com |
| Jan 1st, 2011 | http://web.archive.org/web/20110101/http://www.amazon.com/ |
| Latest date | http://web.archive.org/web/2/http://www.amazon.com/ |
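
The URL patterns above are regular enough to build programmatically. Here is a minimal sketch, assuming the `http://web.archive.org/web/<timestamp>/<url>` form shown in the examples; `wayback_url` and `WAYBACK_PREFIX` are names made up for this illustration and are not part of any library.

```python
from datetime import date

# Prefix taken from the example URLs in this post (assumed stable).
WAYBACK_PREFIX = 'http://web.archive.org/web'


def wayback_url(url, when=None):
    """Build a Wayback Machine URL for `url`.

    `when` can be None (latest snapshot), a year such as 2011,
    or a datetime.date for a specific day.
    """
    if when is None:
        # The post's "latest date" form uses a bare "2" as the timestamp.
        timestamp = '2'
    elif isinstance(when, date):
        # Full dates are written as YYYYMMDD.
        timestamp = when.strftime('%Y%m%d')
    else:
        # Anything else (e.g. a year) is used as a partial timestamp.
        timestamp = str(when)
    return '%s/%s/%s' % (WAYBACK_PREFIX, timestamp, url)
```

For example, `wayback_url('http://www.amazon.com/', date(2011, 1, 1))` gives the "Jan 1st, 2011" URL above, and calling it without a date gives the "Latest date" URL.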

The webscraping library includes a function to download webpages from the Wayback Machine. Here is an example:

    from webscraping import download, xpath

    D = download.Download()
    url = 'http://amazon.com'

    # download the page directly, then via the Wayback Machine
    html1 = D.get(url)
    html2 = D.archive_get(url)

    # print the title of each copy to compare them
    for html in (html1, html2):
        print(xpath.get(html, '//title'))

This example downloads the same webpage directly and via the Wayback Machine. Then it parses the title of each copy to show that the same webpage has been downloaded. The output when run is:

    Amazon.com: Online Shopping for Electronics, Apparel, Computers, Books, DVDs & more
    Amazon.com: Online Shopping for Electronics, Apparel, Computers, Books, DVDs & more
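
Since the point of the archive is to cover for a site that is offline or throttled, one way to combine the two downloads is to try the live site first and only fall back to the cache when that fails. The sketch below is one possible approach, not something the webscraping library provides: `get_with_fallback` is a hypothetical helper, and it assumes each fetcher returns an empty value when the download fails, which is an assumption about `D.get` and `D.archive_get` rather than documented behaviour.

```python
def get_with_fallback(url, fetchers):
    """Return the first non-empty result from a list of download functions.

    `fetchers` is an ordered list of callables that take a URL and return
    the page HTML, or an empty value on failure (assumed convention).
    """
    for fetch in fetchers:
        html = fetch(url)
        if html:
            # Stop at the first source that produced content.
            return html
    # Every source failed.
    return None

# Possible usage with the objects from the example above (not run here):
#   html = get_with_fallback(url, [D.get, D.archive_get])
```

Passing the fetchers as a list keeps the helper independent of any one library, so other caches could be added to the chain in the same way.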